To Depart from Clemency: A DEF CON 2017 Retrospective

Another year, another DEF CON. This year, from the far reaches of LegitBS’s wild imagination came an architecture so bizarre and so confusing that it was actually pretty good. Over the course of three days, we were introduced to cLEMENCy, an intriguing RISC architecture that sported 9-bit bytes, middle endianness, and reversible stacks. It took many sleepless nights, but once again we were able to tool and exploit our way to a tight victory. Here is an overview of the year’s biggest CTF from my perspective as a member of the Plaid Parliament of Pwning.

Initial Trepidation

In August 2016, following the presentation of the DEF CON CTF 2016 results, the Legitimate Business Syndicate (often called LegitBS or LBS) announced that for their final year as the hosts of DEF CON’s premier competition, they would be debuting a custom architecture that Lightning (creator of the world’s most terrifying CTF pwnables) had been working on for two years prior. The reactions to this news were, as one might imagine, mixed. As someone who first learned assembly programming on an M6800, I was excited about getting to use an architecture more RISC-like than x86. Other members of the PPP were significantly less enthused, fearing that the custom architecture would render all of our existing tools useless, and that we would be unable to prepare at all for the following year’s competition. While these fears were justified to a certain extent, LegitBS still pulled off an incredibly fun and exciting game.

cLEMENCy and the Proliferation of Terrible Acronyms

At 9:00 am on the Thursday of DEF CON 25, the Legitimate Business Syndicate tweeted out a link to the official documentation for cLEMENCy — the LegitBS Middle Endian Computer Architecture. While the wonderful folks at LBS might not understand how acronyms work, they apparently have a great sense of humor. Upon downloading and opening the cLEMENCy manual, we discovered that this architecture not only — as the name suggests — is middle endian, but also makes use of 2-byte and 3-byte words composed of 9-bit bytes, which our team and several others lovingly came to refer to as nytes.

As an aside, note that since 9 is not divisible by 4, it doesn’t make much sense to represent nytes using hexadecimal. Instead, we will use octal, where each digit represents 3 bits, so a nyte is exactly 3 octal digits. For clarity, I will use a leading 0x (as in 0xB33F) to denote a number in its hexadecimal representation and a leading 0 (as in 0755) to denote a number in its octal representation.

With this in mind, let’s consider what it means to be middle endian. In the 2 nyte word case, middle endianness is just a swap of the order of the nytes. For instance, if we wanted to write the value 0111222 somewhere in memory, we would actually need to hold the number 0222111 in our register. This is similar to little endianness. However, when dealing with 3 nyte words, the middle-significance nyte goes in the first position, the most-significant nyte goes in the middle position, and the least-significant nyte goes in the final position. For example, consider the numeric value 0111333777; to store this 3 nyte value in a register or in memory, we would store the value 0333111777. Note that the 2 nyte endianness swap is really just a special case of the 3 nyte endianness swap where the last nyte is ignored.
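The reordering described above can be sketched in a few lines of Python. This is only an illustration of the two worked examples in the text, not code from the cLEMENCy tooling; the helper names (`split_nytes`, `to_middle_endian`, `join_nytes`) are made up for this post. Note that Python 3 spells octal literals with a `0o` prefix rather than the bare leading 0 used in the prose.

```python
NYTE_BITS = 9
NYTE_MASK = (1 << NYTE_BITS) - 1  # 0o777, the largest value one nyte can hold


def split_nytes(value, count):
    """Split `value` into `count` nytes, most significant nyte first."""
    return [
        (value >> (NYTE_BITS * (count - 1 - i))) & NYTE_MASK
        for i in range(count)
    ]


def join_nytes(nytes):
    """Glue a list of nytes back into one integer, first nyte on the left."""
    result = 0
    for nyte in nytes:
        result = (result << NYTE_BITS) | (nyte & NYTE_MASK)
    return result


def to_middle_endian(value, count):
    """Reorder the nytes of `value` as described in the text.

    2 nytes: [hi, lo]      -> [lo, hi]
    3 nytes: [hi, mid, lo] -> [mid, hi, lo]
    """
    nytes = split_nytes(value, count)
    if count == 2:
        hi, lo = nytes
        return join_nytes([lo, hi])
    if count == 3:
        hi, mid, lo = nytes
        return join_nytes([mid, hi, lo])
    raise ValueError("only 2 and 3 nyte words are handled in this sketch")


# The two examples from the prose, printed in octal.
print(oct(to_middle_endian(0o111222, 2)))     # 0o222111
print(oct(to_middle_endian(0o111333777, 3)))  # 0o333111777
```

Because the 3 nyte case only swaps the first two positions, applying `to_middle_endian` twice returns the original value.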

Despite the obvious headaches that arise from 9-bit bytes and middle endianness, the rest of the architecture was rather sane. I would highly encourage reading the official documentation, but the general gist is that it has 32 registers of 3 nytes each, memory that’s partitioned into 1024-nyte pages, and special memory regions for network send, network receive, clock data, competition flags, interrupt handlers, and non-volatile RAM. In addition, the instructions are variable length, ranging from 2 nytes to 6 nytes each. By and large these instructions are of a similar format to most other architectures. For each “command” you have multiple varieties that change how the values are loaded, the size of the arguments, and other similar attributes. There are a few interesting instructions, however. Among these is DMT, or “Direct Memory Transfer”, a memcpy-like instruction that allows moving arbitrary amounts of data from one address to another.

Now that we had the architecture available to us, we were able to dive headfirst into tooling.

Sharpened By the Fight

Although the competition technically started on Friday at 10:00 am, since the cLEMENCy details were released on Thursday morning, it was essential that everyone begin preparing tools as soon as possible. Unfortunately, despite my personal excitement about cLEMENCy, I found myself once again leaning toward the defensive side of the game. There are a number of reasons for this, enough that I could devote an entire article to them. Instead, I will try to describe the PPP’s tooling efforts from my perspective. Since the only tools that LBS provided were a programmer’s manual, an emulator, a debugger, and a sample binary, we were forced to build anything else we wanted ourselves. Furthermore, the use of nytes instead of bytes meant that a lot of existing tools that could have helped us were either useless or required more time to modify than we were comfortable allotting. For instance, we considered modifying Binary Ninja to support cLEMENCy, but a quick glance at the disassembler’s Slack channel indicated that it would be incredibly difficult.

Instead, two members of our team had been preparing Snowball, an IDA plugin and disassembler for the RISC-V architecture, which they were then able to modify to support cLEMENCy with only a couple of hours’ worth of work. We also had team members rush to build an assembler as well as clones of standard Unix tools that could operate logically on cLEMENCy binaries.

Sour Lemons

As I mentioned earlier, I once again found myself working on and supporting our defensive efforts. As in previous years, during the competition we were to receive packet captures of all network traffic entering and exiting our host machine, arriving each round on a three-round delay (that is, arriving every 5 minutes on a 15-minute delay). Since these could get huge — some rounds we recorded over 3,000 distinct conversations — we needed pretty powerful infrastructure to manage them. In advance, we had written a packet capture management framework called Aviary which could handle all of the processing we wanted to perform on the network traffic and present it in an intuitive and friendly interface. This was perhaps the most useful of our defensive tools, because it allowed one or two people to effectively monitor all of our network traffic.

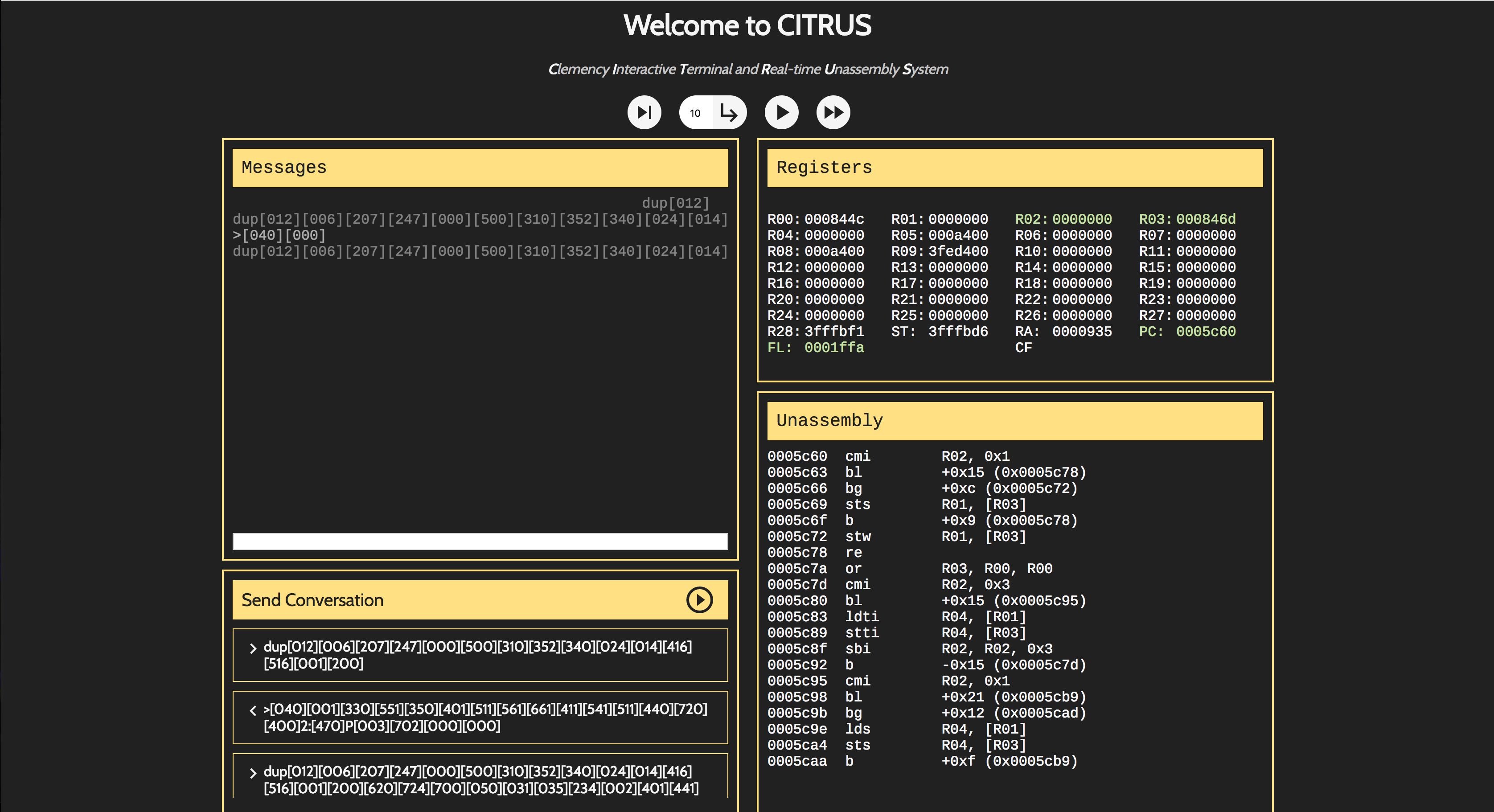

However, once we encountered suspicious network traffic we needed a way to confirm that we were in fact being attacked. To address this problem, I spent most of my time working on CITRUS, the Clemency Interactive Terminal and Real-time Unassembly System.

The basic principle behind CITRUS was to act as a web-based frontend for the cLEMENCy debugger. While the debugger was reasonably powerful (and was more than I had expected LBS to provide us with) it was still a pain to use and couldn’t easily interface with our existing systems. To address this, CITRUS would allow a web-frontend to automatically spawn a debugger instance on an AWS server farm somewhere and then talk to it via websockets. The hope was that CITRUS would make debugging cLEMENCy programs easier while simultaneously allowing us to replay network traffic and examine it for bugs and flags.

What I had originally intended to be a quick little debugging tool ended up becoming my primary focus for the duration of the competition. When I had sketched out a design of CITRUS on paper, I hadn’t considered the difficulties presented by talking to a system that packed its data differently than the rest of the world. Specifically, in order to use the debugger and emulator simultaneously, it was necessary to communicate with the debugger over STDIN/STDOUT and the program itself over a TCP connection. This seems fine at first, until you consider the case in which you need to send a number of nytes that is not a multiple of 8 over a TCP stream. Because the protocol expects to send whole bytes, if cLEMENCy wants to write 5 nytes, it has to write 45 bits of data, followed by 3 bits of padding so that the total number of bits written can be broken into a whole number of bytes.
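To make the arithmetic concrete, here is a minimal Python sketch of packing nytes into whole bytes. It assumes the bits are laid out most-significant-bit first with zero padding at the end; that bit order is my assumption for illustration rather than something taken from the emulator, and `padding_bits` and `pack_nytes` are hypothetical helper names.

```python
def padding_bits(nyte_count):
    """Number of pad bits needed to round nyte_count * 9 bits up to whole bytes."""
    return (-nyte_count * 9) % 8


def pack_nytes(nytes):
    """Pack 9-bit values into bytes, MSB first, zero-padded at the end.

    The bit order is an assumption made for this sketch.
    """
    bits = 0
    for nyte in nytes:
        bits = (bits << 9) | (nyte & 0x1FF)  # append one nyte to the bit string
    pad = padding_bits(len(nytes))
    bits <<= pad                             # trailing zero padding
    total_bits = len(nytes) * 9 + pad
    return bits.to_bytes(total_bits // 8, "big")


# 5 nytes -> 45 data bits + 3 pad bits = 48 bits = 6 bytes
print(padding_bits(5), len(pack_nytes([0o777] * 5)))
```

Only when the nyte count is a multiple of 8 does the padding disappear, since 72 bits is the first length that is both a whole number of nytes and a whole number of bytes.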

This alone isn’t an issue: to read a packet of packed nytes, one simply needs to read bits until there aren’t enough to fill a full nyte, then drop the remaining padding. Unfortunately, TCP stacks often try to be intelligent to improve performance. Thus, if two packets come in at the same time, they will be buffered for a short period of time, resulting in them being automatically concatenated before being presented to the application. Once this happens with packed nytes, the stream becomes unreadable, because an unspecified number of 0 bits used for padding are scattered through the buffer where the packet boundaries used to be. My first implementation of CITRUS, which used the Node.js Net module to communicate directly with the emulator, suffered from this exact problem. After spending several hours trying to find a way to deal with this, I ended up spinning up a separate Python process to serve as a proxy for communication with the emulator since its TCP stack does not do such buffering.
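The failure mode is easy to reproduce without any sockets at all. The Python sketch below is an illustration rather than the actual CITRUS code: it assumes MSB-first packing with trailing zero padding, uses hypothetical helper names, and simulates two writes being merged by simply concatenating their bytes.

```python
def pack_nytes(nytes):
    """Pack 9-bit values into bytes, MSB first, zero-padded (assumed layout)."""
    bits = 0
    for nyte in nytes:
        bits = (bits << 9) | (nyte & 0x1FF)
    pad = (-len(nytes) * 9) % 8
    return (bits << pad).to_bytes((len(nytes) * 9 + pad) // 8, "big")


def unpack_nytes(data):
    """Read as many whole nytes as fit, then drop the leftover padding bits."""
    total_bits = len(data) * 8
    count = total_bits // 9
    bits = int.from_bytes(data, "big") >> (total_bits - count * 9)
    return [(bits >> (9 * (count - 1 - i))) & 0x1FF for i in range(count)]


first = pack_nytes([0o111])   # 9 data bits + 7 pad bits = 2 bytes
second = pack_nytes([0o222])  # likewise 2 bytes

# Read separately, each write decodes cleanly.
print([oct(n) for n in unpack_nytes(first)])
print([oct(n) for n in unpack_nytes(second)])

# Read as one merged buffer, the first write's padding is treated as data,
# so everything after the first nyte is misaligned.
merged = first + second
print([oct(n) for n in unpack_nytes(merged)])
```

The merged buffer is 32 bits long, so the reader extracts three nytes instead of two, and only the first one matches what was sent. Without knowing where the original boundaries were, the receiver has no way to tell padding from data.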

While this wasn’t the only problem that arose during development, I like it because it illustrates the random problems you can face while working with a system like cLEMENCy. Unfortunately, as a result of some of these issues, CITRUS never really met its original goal. The system worked well locally, but when it was spinning up twenty different emulators, it often choked and died. However, it did prove incredibly helpful for testing network conversations for exploit behaviour, and we were able to use it in a number of ways to aid our defensive stack.

To Those About to Hack…

Once the competition began Friday morning, it was non-stop work. On the competition floor all teams had up to 8 members seated at their team’s workspace. This was the only way to connect to the game’s infrastructure, so while it was not required for team members to play on the floor itself, there was a certain implicit requirement for some portion of the team to be present. In practice, we saw most teams had 6 to 8 people in the competition room for the duration of the game. From the game start onward, rounds progressed every 5 minutes with each team able to submit one flag for each of an opposing team’s services in any given round. On Friday, the game lasted from 10:00 am to 8:00 pm, with a total of 3 challenges (“rubix”, “quarter”, and “internet3”) released that day. The first challenge, “rubix”, was challenging because it was nearly impossible to patch without violating the service functionality tests (which causes a team to forfeit all points associated with the problem during a failed round). The second binary, “quarter”, had the opposite issue wherein by the end of the second day everyone had patched out all of the major bugs. The remaining program, “internet3”, had much more action with exploits being thrown all the way until the game’s end.

Likewise, on Saturday the game lasted from 10:00 am to 8:00 pm with five more challenges released (“babysfirst”, “half”, “legitbbs”, “picturemgr”, and “trackerd”). However, much to our surprise, in spite of the challenge additions, none of the existing challenges were removed. This made it much more difficult to play defense, because it required us to stretch ourselves thin monitoring several different services. Finally, on Sunday the game lasted only from 10:00 am to 2:00 pm with one final challenge (“babyecho”) being released, concluding a frenetic weekend.

…We Salute You

For a precise breakdown of everything that occurred over those three days, I would encourage you to examine LegitBS’s data dump from the competition. While I’ve only given it a cursory glance, you can see a rough graph of how scores progressed over time below:

#defcon25 CTF score graph. CC @LegitBS_CTF pic.twitter.com/UsukelGR24

— Robert Xiao (@nneonneo) July 31, 2017

As the graph shows, the game was very close, with us edging out HITCON by only about 3,000 points. In addition, all of the other teams played very well, and really made the competition exciting. Special kudos to pasten for first-blooding us with picturemgr. I think we all got a good kick out of that.

With LBS’s departure, we’re not sure what the future of DEF CON CTF holds; however, regardless of what happens, DEF CON 2017 was a blast. Once again, thanks to all of the teams and to LegitBS for an incredibly fun weekend.